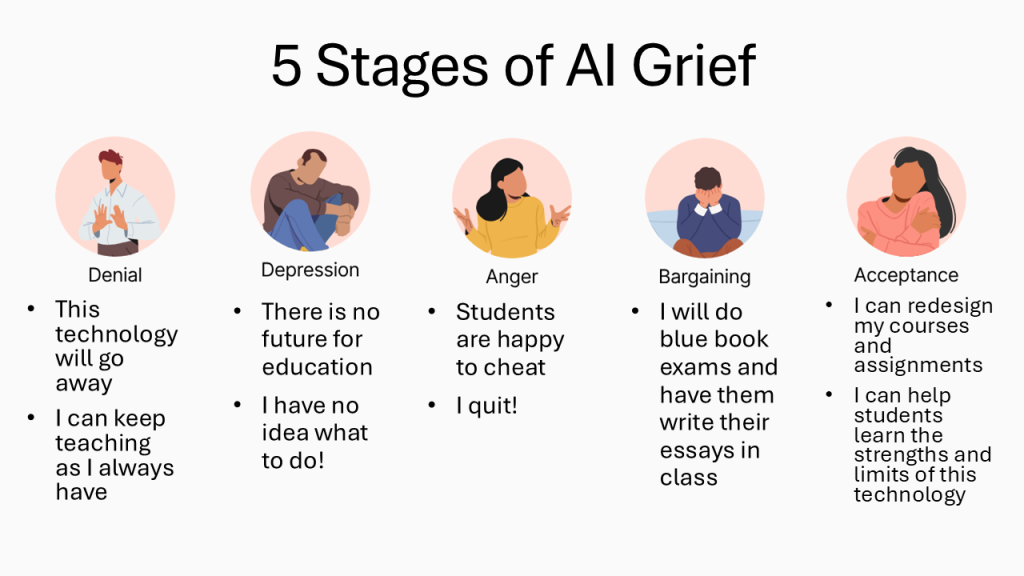

It strikes me that the best way to think about the process of faculty grappling with the new technology of LLMs (Large Language Models) is by analogy to the process of working through grief outlined by Elisabeth Kübler-Ross in her well-known five stages of grief.

Denial

The first stage is denial, and we have certainly seen that among faculty. I think that the attempt to present the technology as especially harmful to the environment stemmed in part from a misunderstanding of the technology (what is involved in training LLMs versus what is involved in ordinary use of the resulting chatbots) and a failure to compare its impact with that of other technologies (such as Netflix streaming). More fundamentally, however, it was driven by a hope that an ethical case could be made against the technology itself, such that it would be outlawed and cease to be a problem. Others simply hope that it will be a flash in the pan, a passing fad. There has definitely been hype from tech companies, and there is a bubble that will burst in terms of the promises made about how adopting AI will enhance productivity and profits. That burst is unlikely to mean that the technology goes away, however, any more than the bursting of the “dot com” bubble meant the end of the internet.

Depression

The second stage is depression. Educators who have said they feel like quitting surely exemplify this. I have heard at least some voices announcing that they were indeed leaving teaching, although I am not sure whether they actually did so in the end.

Anger

The third stage is anger. If you don’t think faculty have been angry, visit the Professors subreddit on Reddit. This anger also issues in a deep distrust of students, with faculty viewing all of them with suspicion.

Bargaining

The bargaining stage appears to me to be what is happening when faculty say that they can simply prohibit AI use in the classroom and give handwritten blue book exams. Now, that will work for some classes and will in some instances be a really good option. If you teach online, however, it isn’t an option at all. Jumping at the hyped promises offered by tech companies in the form of “AI detectors” is also a form of bargaining. Those products are snake oil. They produce false positives, and there is no clarity about what features the “detectors” are using to determine that AI was used. With Turnitin’s plagiarism detector, if you failed a student because of the similarity percentage, ignoring the fact that some of the matching text was in quotation marks or was the title of a book, you would hopefully be reprimanded. Letting that tool decide for you is unethical. How much more unethical is it to use a tool that tells you that something was created by AI when you don’t know the basis for that verdict, the tool has been shown to be unreliable, and you are given no data that would allow you to render your own judgment?

Acceptance

The final stage is acceptance. When I refer to acceptance, I don’t mean acceptance of the hyped claims of tech companies. Most people who accept the loss that caused their grief do not come to love and celebrate that loss. That isn’t what acceptance means in this context. It is important to emphasize this, since some people imagine AI doing all sorts of things that it cannot currently do. Science fiction may become science fact, but the fact that we have speech-imitators does not mean that we have systems that can serve as consistently reliable tutors, counsellors, or whatever else. This post predicting trends for 2026 is just one example. Its suggestion that traditional educational skills and “forced breadth” are outmoded is radically at odds with what we are seeing in the world, where people who lack scientific, economic, political, and cultural literacy, and more generally critical thinking skills, participate in democratic processes and do great harm in the process.

Nor does acceptance mean using AI for grading, letting students use it to produce their assignments, and carrying on as usual.

Acceptance means accepting that the technology is here to stay, just as happened with other new technologies, from chalkboards (boy, were those controversial initially!) to Wikipedia. That is what Ankur Gupta and I sought to do in our book Real Intelligence: Teaching in the Era of Generative AI. It is absolutely possible to redesign assignments and craft new ones that will work in the context of current technology. We did it with Wikipedia (or at least I hope you did), and we can do it for LLM-powered chatbots.

Teaching Students to be AI Savvy

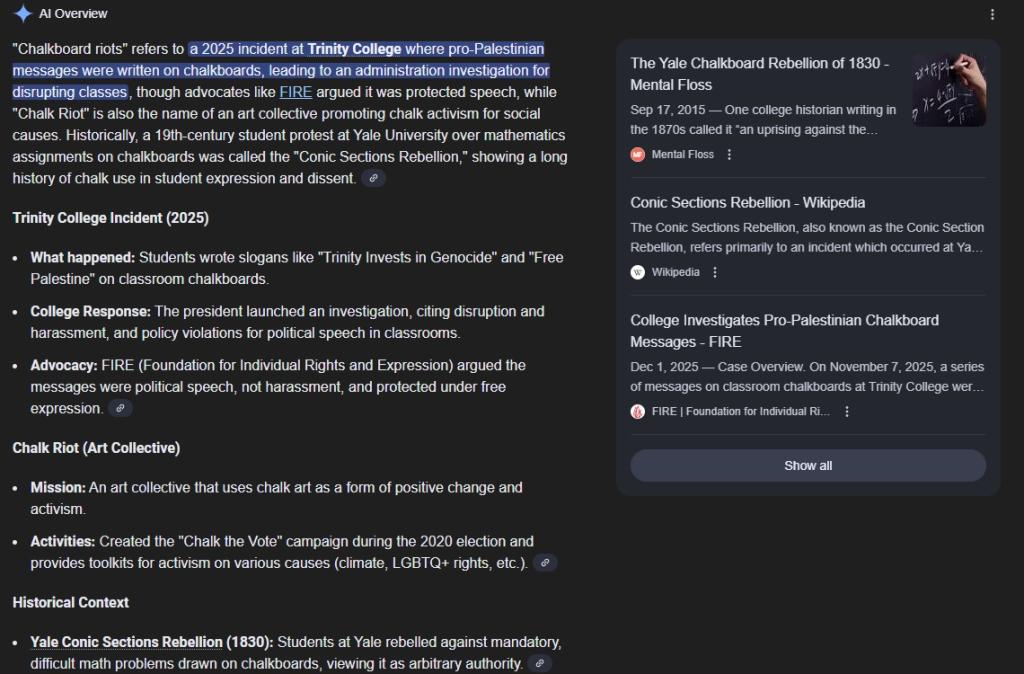

Another crucial thing to do is show students how hit-or-miss AI is at something it isn’t inherently capable of, as anyone who understands the technology will realize: providing information. Google’s AI Overviews offer plenty of examples. When I searched for the “Chalkboard Riots” (technically the “Conic Sections Rebellion”) that I mentioned, intending to link to them, I got something even better: this hodge-podge blurring that event with more recent ones.

AI in the Context of Religious Studies

As another example, I was struck by the Christian Post promoting an AI tool that is allegedly better suited to its conservative Christian readers. It is called Freespoke, and the Christian Post site actually flagged it as sponsored advertising that may not reflect the values and views of that site. I nonetheless took a look. What I found was a site that aggregated news and sought to flag content offering viewpoints far from those of conservative Evangelicalism as “Left” so that readers would avoid it. The same news outlet would receive a different rating depending on the article, suggesting that it was not just the platform but the content that was being filtered. The takeaway should be clear: AI can be used to reinforce biases, and it never automatically eliminates them. Human programmers write the code, and the programs work with human data.

There was also an article about AI pastors stealing attention away from real human ones. Not only is it possible to teach students about AI in and through religious studies courses; it is natural and indeed urgent to do so. Those of us who teach core curriculum courses can highlight that learning to cope with misinformation about religion in the era of AI teaches media and information literacy skills that are directly applicable to medicine and other areas.

Conclusion

So what does that mean for educators passing through the five stages of AI grief? Accept that the technology is here to stay, and accept that as an educator you need to experiment with it, understand it, and help your students understand what will undoubtedly befall them if they rely on it uncritically. They need to be AI savvy, which includes a healthy dose of skepticism. As Lance Eaton writes:

> With AI, yes, it will be interesting. But for all the hype, what it can actually deliver right now is still incredibly limited. A lot of the discourse is, “It’s interesting now, but just wait.” That’s been the story of AI for decades. With generative AI in particular, we’re already on year three of hearing that real artificial general intelligence is six months away.
>
> There are serious questions about whether we can actually get there from here. When people talk about AI as transformational, a lot of that depends on moving it from here to there, and “there” keeps moving just a little farther away.
>
> I agree that if we get there, it will change a lot of things. But there’s no guarantee that we can, at least not right now, especially when you factor in companies with billions of dollars shaping the narratives about how we talk about AI.
>
> These same companies make comparisons of this moment to the Manhattan Project, one of the big technological gambles that paid off (sorta, given where we stand with nuclear arms and nuclear power). But there are lots of technologies that never took off, regardless of how promising they were going to be. Whether it was the Segway from Bezos or the Hyperloop from Musk, these were technologies that would change everything but have largely disappeared.
>
> That’s the tension a lot of people in teaching and learning spaces, and in higher ed more broadly, are sitting with.

Talk to students about their views of AI. You will likely find that they want to be trusted, and to trust you not to use AI inappropriately either. Help them understand how they are shortchanging themselves by misusing it, including by relying on it uncritically, as though it were in fact intelligent. Explain where the term AI comes from and why it is a misnomer.

Use tools ranging from simple cloud storage folders where students save their work (and where you can see the revision history) to PISA Editor. The latter is a good start, but I am sure that more robust tools for documenting the process of research and writing are still to come. There is a great opportunity for educators in any field to collaborate with colleagues in computer science to create tools that help ensure, to the extent possible, that students themselves engage in the assigned activities they need in order to learn.

Because, as Sonja Drimmer and Christopher Nygren note, “Learning is not a problem to be solved; it’s a process to be undertaken.”

I felt an infographic was called for, so I made this one about the Five Stages of AI Grief: