Continuing with my manuscript, now focusing on mathematics:

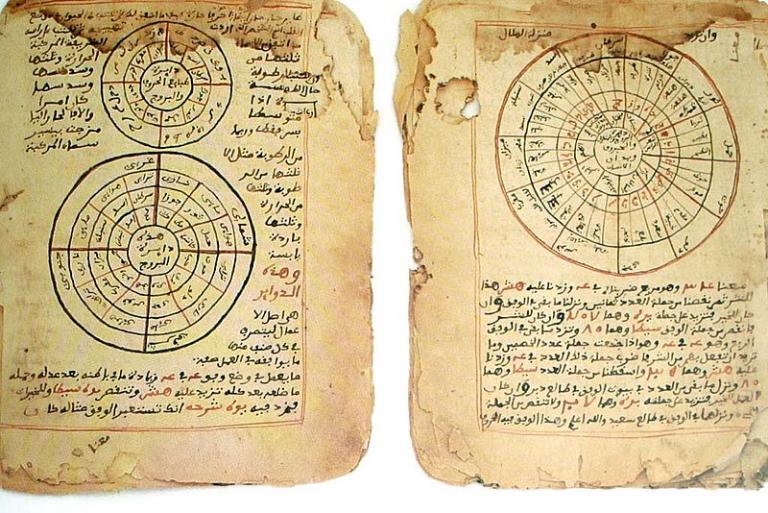

Arab Muslims had very practical reasons for their interest in mathematics. Calculating the qibla (the direction of prayer toward Mecca), which was required for the proper orientation of mosques, relied upon rather sophisticated mathematical operations. So did fixing the exact dates of the holy fasting month of Ramadan. (Because Ramadan is a month of the lunar calendar, whose year is about eleven days shorter than the solar year, it moves through the seasons. Sometimes it falls in winter, sometimes in summer, sometimes in fall or spring.)
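To give a sense of the calculation involved, the qibla can be expressed in modern terms as the initial great-circle bearing from the worshipper's location to Mecca. The short Python sketch below is an illustration only, not a reconstruction of any medieval method. The function name `qibla_bearing`, the spherical model of the Earth, and the approximate coordinates used for the Kaaba are all assumptions made for this example.

```python
import math

# Approximate coordinates of the Kaaba in Mecca, in degrees (assumed values).
MECCA_LAT = 21.4225
MECCA_LON = 39.8262


def qibla_bearing(lat_deg, lon_deg):
    """Return the initial great-circle bearing toward Mecca.

    The result is in degrees clockwise from true north, assuming a
    perfectly spherical Earth (a simplification).
    """
    phi1 = math.radians(lat_deg)        # observer's latitude
    phi2 = math.radians(MECCA_LAT)      # Mecca's latitude
    dlon = math.radians(MECCA_LON - lon_deg)  # difference in longitude

    # Standard initial-bearing formula from spherical trigonometry.
    y = math.sin(dlon) * math.cos(phi2)
    x = (math.cos(phi1) * math.sin(phi2)
         - math.sin(phi1) * math.cos(phi2) * math.cos(dlon))
    return math.degrees(math.atan2(y, x)) % 360.0


# Example: approximate coordinates for Cairo (also assumed values).
cairo_bearing = qibla_bearing(30.0444, 31.2357)
print(round(cairo_bearing, 1))
```

For Cairo the function returns a bearing that points roughly southeast. A place due north of Mecca on the same meridian gets a bearing of 180 degrees (due south), which is a quick sanity check on the formula.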

The exact reckoning of inheritance shares according to the detailed Qur’anic rules also demanded careful arithmetic. And the hajj, the pilgrimage to Mecca, brought tens or even hundreds of thousands of Muslims to the holy cities of Arabia from all corners of the globe, by land and by sea. This was an operation that required navigational skills of the highest order.

However, unlike the ancient Egyptians before them, the Arabs did not limit their mathematical investigations to merely practical matters. They made significant contributions, for example, to trigonometry and spherical geometry. It was a scholar writing in Arabic in Baghdad, al-Khwarizmi (d. ca. 850), who set out the systematic technique for solving equations with unknown quantities that we know as “algebra.” (He stated his problems entirely in words; the familiar letters and other symbols came later.) In fact, the very term “algebra” comes from the title of one of al-Khwarizmi’s books. He used the word al-jabr to mean “restoring,” that is, “restoring” a subtracted quantity by adding it to both sides of an equation; the word originally pertained to “setting” broken bones. Al-Khwarizmi’s name itself shows up in Western mathematics, in a distorted form, as the term algorithm, which denotes a system of rules or a process for calculations. (Algorithms have become especially important in our age of electronic calculators and computers.)

It was also from al-Khwarizmi that the West learned of the numerals, Indian in origin, that we call Arabic numerals. However, the transfer took a while to occur, since his work had to wait three centuries before being translated into Latin by an Englishman named Adelard of Bath. And it was only after considerable objection that Arabic numerals began to be adopted in Europe in the thirteenth century. Europeans found the notion of “zero” especially amusing. It was, they said, “a meaningless nothing.” Why have a symbol for nothing at all? But eventually even Europeans began to recognize that these numerals, and the idea of the place system that accompanied them, had certain advantages over Roman numerals. (Try, just as an experiment, to do long division or multiplication with “MDCCCXLVIII” and “CDXIV” instead of “1848” and “414”! You will soon see why the Europeans eventually gave up their resistance.) Today, our words cipher and decipher and even zero itself come from the Arabic term for “emptiness” or “nothingness,” sifr.
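The experiment with “MDCCCXLVIII” and “CDXIV” can be sketched in a few lines of Python. The example below is only an illustration, and the helper names `roman_to_int` and `long_multiply` are invented for it. It first has to convert the Roman numerals into place-value numbers before any arithmetic can begin. It then multiplies them the schoolbook way, one digit at a time, which works only because each digit's position tells us its power of ten, with zero holding the empty places.

```python
# Values of the individual Roman numeral symbols.
ROMAN_VALUES = {"I": 1, "V": 5, "X": 10, "L": 50, "C": 100, "D": 500, "M": 1000}


def roman_to_int(numeral):
    """Convert a Roman numeral such as 'CDXIV' to an integer."""
    total = 0
    for i, symbol in enumerate(numeral):
        value = ROMAN_VALUES[symbol]
        # A smaller symbol written before a larger one is subtracted
        # (for example, the C in CD or the I in IV).
        if i + 1 < len(numeral) and value < ROMAN_VALUES[numeral[i + 1]]:
            total -= value
        else:
            total += value
    return total


def long_multiply(a, b):
    """Schoolbook multiplication: one partial product per digit of b."""
    partials = []
    for place, digit in enumerate(reversed(str(b))):
        # Shifting by 10 ** place is what the place system makes possible.
        partials.append(a * int(digit) * 10 ** place)
    return partials, sum(partials)


a = roman_to_int("MDCCCXLVIII")   # 1848
b = roman_to_int("CDXIV")         # 414
partials, product = long_multiply(a, b)
print(a, b)        # 1848 414
print(partials)    # [7392, 18480, 739200]
print(product)     # 765072
```

Each partial product is simply 1848 times a single digit, shifted left by the right number of places. Roman numerals offer no such shortcut, since the position of a symbol carries no power of ten.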