“The rise of artificial intelligence technology is causing considerable widespread anxiety. What will the impact be on us and our children? Is the social fabric that holds us together beginning to unravel? What makes a human life worth living, and how is this unique, valuable, and distinct from what technology offers us?”

From “The Delta Framework”

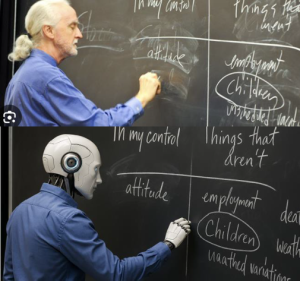

I team-taught two different colloquia this semester, “Apocalypse” and “We Can . . . But Should We?,” both of which regularly intersected with issues related to artificial intelligence. During my final seminar with my “We Can . . .” students, the three students tasked with leading the discussion included this AI surprise in one of their PowerPoint slides:

“Wait a minute!” I complained. “Where the hell is the ponytail? Why does the robot’s shirt fit better than mine? Why is the robot’s handwriting better than mine? And what does ‘uaathed’ mean??” And, of course, as a Boomer I wanted to know: “How did you do that?” Simple: get a picture of me from the Internet (this one’s from a talk I gave thirteen years ago; I’m still that good-looking!), plug it into your favorite AI portal, instruct it to “make an AI image of this picture replacing the professor with a robot,” and voilà! You have created my AI replacement, who undoubtedly knows a lot more about Stoicism (the topic of the talk) than I do.

During the last week of “We Can . . . But Should We?” my teaching partner and I chose to reflect on the semester through the lens of a specific moral framework. One of the assignments that week was to watch the video of a talk given last September by Dr. Meghan Sullivan, a philosophy professor at the University of Notre Dame, on the topic of “AI, Faith, and Human Flourishing.” It was the keynote address at a Notre Dame conference of the same name, which “brought together a dynamic, ecumenical network of scholars, faith leaders, technologists, journalists, and policymakers who believe in the enduring relevance of Christian ethical thought in a world of powerful AI.” The talk begins at around 6:30.

Sullivan begins her talk by inviting us to “contemplate what it would mean for there to be a growth in conscience and ethics that is proportional to the astounding growth in digital technology that we are currently witnessing.” After the first successful test of a nuclear bomb, a commentator referenced the prophet Hosea from the Jewish scriptures: “They have sown the wind, and they shall reap the whirlwind.” In many ways, this same warning applies to artificial intelligence. We have created something incredibly useful and seductive, yet also dangerous and concerning, something that threatens to break free of any guardrails or limits we might devise.

The central focus of Sullivan’s talk is to ask how we should imagine ourselves as human beings in a world both saturated with and threatened by artificial intelligence. How do we preserve a sense of human virtue and flourishing going forward in a world in which many fear that what we believe to be most distinctive and important about being human–autonomy, free choice, intelligence, moral awareness–will become more and more irrelevant?

Persons of Christian faith need to ask what it means to live a faithful and virtuous life, as well as what it means to serve the common good, in a world that has changed radically in a mere handful of years. What specific and unique contributions might persons of faith make to creating the future world that we are rushing toward? We can’t slow the technology down, so maybe we need to speed up the conversations about how to bring our faith forward.

Over the past two years, Sullivan and several colleagues have asked technology and faith leaders, journalists, and policymakers two questions. 1: What unique perspectives do you think the Christian community has to offer to the discussion of AI ethics that is currently unfolding? 2: What would it take to get those voices into the conversation, and what are the prospects for getting Christians to participate in this debate?

What might be the “ethical floor” in creating AI technology, what I call the “moral minimum” in my ethics classes? Interviewees, both secular and persons of faith, agree that this floor includes protecting the right to privacy, safety, explainability, responsibility to users in key ways, and so on. But what might a moral framework that rises above the floor, above the moral minimum, include? Christians in particular have a lot to contribute here. If the ethical floor is necessary but not sufficient, what are the other indispensable parts of a moral framework for AI?

Sullivan’s insights resonate with me as a fellow philosopher who has taught introductory ethics courses at least 100 times over my career. The ethical floor for AI is compliance, obedience to rules that serve human interests in basic ways, something similar to what Immanuel Kant might endorse. On top of that foundation, Sullivan’s research advocates a virtue ethics model, an ethical vision that includes human flourishing and the common good, features that rise far above mere obedience to rule-based duties. Developing technology (in this conversation, AI) should serve these goods rather than obstruct them for the sake of efficiency or productivity.

Specifically, the framework Sullivan and her colleagues have developed goes by the acronym “DELTA,” described in the following brief video:

“What makes a human life worth living, and how is this unique, valuable, and distinct from what technology offers us?” Here are five things to consider that will guide the ongoing conversation:

Dignity: Every human is valuable simply because they are human, not because of how smart or productive they are.

Embodiment: We are physical, vulnerable, social creatures. Mortality makes life precious. Virtual reality can never fully capture lived experience.

Love: Loving relationships involve two-way exchanges. AI tools and systems can simulate such relationships, but true flourishing requires real, messy human connection.

Transcendence: There are many beautiful and wonderful things that transcend human capacities, things that inspire awe and wonder. Not everything is reducible to our capacities.

Agency: Human flourishing requires the freedom and ability to make moral choices. We must protect the decisions that only a human conscience should make from technology’s tendency to diminish these capacities.

Over my next several posts I will be considering each of the “DELTA” elements specifically, both looking back in the archives and looking forward in my discussion. Stay tuned!

Heads up! Three weeks from today, May 24, big changes will be coming to this blog. New format, new platform, and more! Stay tuned over the next three weeks for more information.