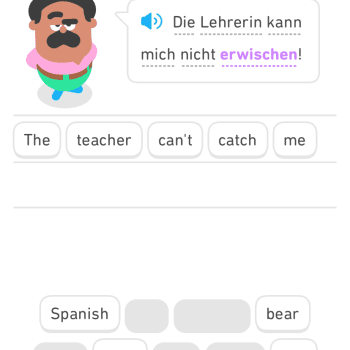

This article gets things exactly right. Digital skills can be neither an “add-on” nor solely the purview of computer science majors. “Digital natives” may be faster with their thumbs, but that isn’t the same as knowing how to discern reliable information on the internet they surf so fluidly and freely. From the article: “Today’s traditional-age students are digital natives. Google and Wi-Fi have been available for as long as they can remember; the first iPhone came out when they were in elementary school. But there’s a difference between familiarity and understanding. Quickly finding information online doesn’t mean you know how to evaluate its trustworthiness. Growing up using apps doesn’t mean you know how to build one. Some students are digitally savvy when they begin college. But others are not. How can a college ensure that all of its students graduate with the digital skills they will need to thrive in their careers and beyond?”

In the unit on Islam that I have been teaching, the hadith provided a great way to connect ancient and modern around the themes of reliability, fabrication, skepticism, trust, authority, and fact-checking. The title of this post combines a longstanding interest of mine with a phrase that expresses that interest in terminology that was new to me. I’ve long been aware that, even if carrying a phone connected to the internet does not make one a “cyborg” in the sense envisaged by sci-fi, it is still something closely related to those expectations. The post on the blog Only a Game that introduced me to the term had this to say:

The trouble with surrogate knowledge is that it gives us the feeling of ‘knowing the answer’ while robbing us of any actual competency. Worse, we can never be sure that what we are given is correct unless we already possess some knowledge of the subject and are merely ‘brushing up’ an answer…Surrogate knowledge is an oxymoron, a contradiction in terms. If you are merely repeating an assertion, you cannot claim to possess knowledge. Indeed, the crisis about what it means to know is the essence of our contemporary cultural catastrophe – a morass of misunderstandings now glossed under ‘fake news’ and ‘post-truth’ that marks the culmination of a disaster expertly foreshadowed by Nietzsche centuries before its impact was felt. We are cyborgs who, even now, trust in cybernetic networks to deliver answers they lack the knowledge to interpret, and still feel, undeservedly, that we know more than people in earlier eras, as if knowing more was akin to collecting stamps.

I mentioned in class the possibility of something like Google Glass allowing instantaneous fact-checking of things we see. But that depends on fact-checking websites and other services being trustworthy. Ultimately we cannot avoid the question of trust, whether we trust religious authorities, academic experts, the internet, or ourselves.

How these technologies relate to exam-taking and other classic educational practices is discussed here:

https://www.thetechedvocate.org/smartwatches-and-high-tech-cheating-in-education/

Also related to the topic of this blog post is this statement in a piece in Inside Higher Ed: “If you follow the right people and institutions, Twitter can function as a self-updating annotated bibliography.” See too:

https://thewayofimprovement.com/2019/11/05/sam-wineburg-demonstrates-historical-thinking/

Fact-checkers offer some hope in this review of a book about why humans are wrong about everything.

Business Insider highlighted Facebook’s inclusion of Breitbart as a source of high-quality journalism.

The Internet Archive took on sources that cite Wikipedia.

From an article about statistics for views of fake news on Facebook: “False news reports that attack U.S. politicians have been viewed more than 150 million times on Facebook since the beginning of 2019, according to an analysis published Wednesday that points to a growing threat of deception swamping next year’s elections.”

Also worth noting are the implications for publishers of changes Google has made.

And finally a call for papers on the question of what information is.