It’s a week of tough questions about transhumanism. After reading my post on moral hazard for uploaded humans, Gilbert asked:

OK, so people value other people based on some kind of proximity function. In practice they instinctively use some evaluation procedure that generalizes badly to modern situations (where interactions can be more remote) and even worse* to uploads. Perhaps a bit like different polynomials may be functionally identical on some finite fields but not on others so that using the “wrong” one may be efficient for one domain but catastrophic for another one.

Going by your premises the solution seems obvious: Mass upload and then fix the bug.

That is, of course, unless patching healthy minds is somehow taboo. Which it is, for the same reason doing the same to the body is: Both are unnatural in the philosophical sense of the term. But if human nature wasn’t intrinsically worthy of respect, well then you would be better advised to chip away at reverence for the mind-as-it-is so we’d be more comfortable studying how it leads us astray and making any helpful and feasible modifications.

Once again, let me start with the very practical and then wander out to the philosophical. Being able to upload and/or simulate consciousness is no guarantee that we’d understand how it works or how to fix it. Geneticists can sequence the genes of various species and can even synthesize an entire genome, without knowing what any of the genes do or how you would alter their function. So the fact that human minds could be run outside of conventional bodies wouldn’t mean we’d be able to have direct influence over their workings or that any changes we could make would have very predictable effects.

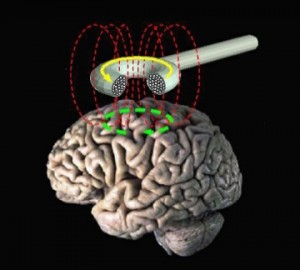

That caveat aside, I think Gilbert’s question raises some really interesting ethical dilemmas. You run into the expected problems of identity, but the uncertainty involved in altering the mind also brings up issues of medical ethics. How would you test a mind-modification that you thought might have the potential to do a lot of good?

As is standard procedure for risky but promising treatments, the mod could be offered to volunteers in the virtual world who were painfully deficient with regard to their moral instincts and wanted to be better (the equivalent of this guy from C.S. Lewis’s The Great Divorce). It might be tempting to just run computer models, but once you can adequately simulate human minds, that sounds a lot like creating people for the sole purpose of research and then destroying them.

The best option I can come up with is letting volunteers bifurcate. When they agreed to enter the trial, they’d duplicate their software/consciousness and make the change to one of the copies. (Fellow scientists reading this are already squeeing at the rigor of the control group set-up.) But at the end of the experiment, what would you do?

Maybe both copies would want to go on as they are, but, if a change dramatically improved our lives, would the control group version of you want to merge with the augmented self? Is there any way to talk about such a merge (or maybe the initial treatment, too) without it being the same thing as death?

I’m suspicious of any attempt to define a flaw as central to our own identity, but I don’t know how radical a change you can go through and still have any connection to your previous self. For example, I was pretty roundly disliked before I went to college and, in that environment, developed some pretty bad moral habits. I certainly hope that my future self will treat people more like people and less like prompts on a kind of morality SAT, but I don’t wish that Future!Leah would act differently because she had our past erased.

I suspect I’m rebelling mainly against the way the change happens, not its magnitude, so I don’t know if I trust my revulsion. We applaud major identity shifts provided they take place over a long timespan, but I don’t see any great virtue in prolonging suffering and bad habits as an end-in-itself. Why shouldn’t the bifurcated subjects remerge and abandon the warped path one of them was stuck on?

How good would a change have to be in order to make it worth your while to subsume your self in a similar but different version? How confident are you that you would recognize the altered you as superior if it truly was?

Call it a fun sci-fi premise. Or a decent metaphor for conversion.