In a recent essay in The New Atlantis, “The Tech Backlash We Really Need,” L. M. Sacasas reviews the tech backlash resulting from our current digital disillusionment and calls for us to look beyond the more obvious gains and losses that come with new technologies.

Even if we were to resolve the issues raised by most technology critics, including the more challenging concerns about individual autonomy and social equity, further questions would remain. Sacasas writes:

We fail to ask, on a more fundamental level, if there are limits appropriate to the human condition, a scale conducive to our flourishing as the sorts of creatures we are. Modern technology tends to encourage users to assume that such limits do not exist; indeed, it is often marketed as a means to transcend such limits. We find it hard to accept limits to what can or ought to be known, to the scale of the communities that will sustain abiding and satisfying relationships, or to the power that we can harness and wield over nature … we have convinced ourselves that prosperity and happiness lie in the direction of limitlessness.

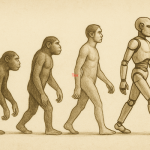

Part of what it means to be human is to have ambitions and abilities that enable us to transcend whatever limits we may encounter. In this, technology has been our augmenting aid from the beginning. Indeed, it is impossible to imagine the human condition without technology. With technology, we were able to form stable social groups, build cities, and settle in inhospitable environments.

And yet, there are points at which transcendence becomes transgression, because there are limits to what we should be and do. Dave Eggers’s novel The Circle, set (as its epigraph signals) in the unlimited future anticipated in East of Eden, reveals the comedic and tragic dimensions of technological temptations toward omniscience, omnipresence, and omnipotence.

Existence in Eden, by contrast, depended on the paradox of creative restraint. In Packing My Library, Alberto Manguel speaks of the challenge “to reject the serpent’s temptation to aspire to be gods and also to reflect God’s creation … to accept that the limits of human creation are hopelessly unlike the limitless creation of God, and yet to strive continuously to attain those limits.”

In The Library at Night, Manguel reflects on two ancient images that signify the ambitions and limits of human technological innovation: the Tower of Babel and the Library of Alexandria. The building of the Tower, designed to reach the heavens, was halted by God. The destruction of the Library, which attempted to collect “all imagination and knowledge,” came through the actions or inactions of temporal rulers. The lessons learned from these failures remain ambiguous, and we continue to build more and better towers and libraries with varying degrees of success.

Manguel says that when the Weizmann Institute in Rehovot, Israel, built its first computer, the scholar of Jewish mysticism Gershom Scholem suggested it be named Golem Aleph. The Golem, a fantastic creature created by humans that is associated with protection as well as destruction, symbolizes the tension between creation and constraint. Manguel records that when Scholem spoke with Jorge Luis Borges about the Golem, he reminded Borges of Franz Kafka’s “unappealable dictum”: “If it had been possible to build the Tower of Babel without climbing it, it would have been allowed.”

There are limits we cannot transcend. A human being remains embodied and bound by time, and one can enhance and extend oneself only so far (vision with glasses, speed with shoes, memory with records, communication and connection online) before encountering a physical or psychological limit. And there are moral limits we should not transgress. Our technologies should be designed to respect these.

And yet, the potential of who we can become and what we can do continues to evolve. Technologies, from Babel’s languages and Alexandria’s words to Golem Aleph’s digits, augment our autonomy and agency.

There are signs of hope that the current tech backlash will lead to larger questions about human dignity and flourishing. In their “Prolegomena to a White Paper on an Ethical Framework for a Good AI Society,” Josh Cowls and Luciano Floridi synthesize values appearing in five different statements on ethical principles for artificial intelligence. In these statements they find the four core principles commonly used in bioethics (beneficence, non-maleficence, autonomy, and justice), to which they add a fifth, explicability. All of these include a focus on social innovation as well as technological innovation, an approach to technology that is scaled for humans.