As a Christian trained in sociology, I sometimes find it difficult to “leave the statistics alone” when they appear in popular publications, sermons, or adult Sunday school. Recently, several Christian news outlets noted a new survey finding, with bold titles like: “80% of Churchgoers Don’t Read Bible Daily.” Such a phrase is meant to provoke reading, but the provocation is the interesting part. This claim assumes that Bible reading and churchgoing ought to go hand in hand, whereas survey findings suggest that this is not the case. This is an anecdotal example of what sociologist Mark Chaves argued regarding religious incongruity. His point was directed mainly at scholars whose work makes the same assumption: that religious people are supposed to be consistent in belief and behavior. In an earlier post, I showed that religious incongruity goes both ways: sometimes Asian American Christians appear less religious than expected, while Asian Americans with no religious affiliation can be surprisingly more religious than expected. Sometimes these expectations are built into surveys, where questions are skipped based on a previous answer. This happened in the National Asian American Survey 2008, where those who reported “no religious affiliation” were not asked a question about church attendance.

The recent news reports about the incongruity of Bible reading and church attendance are based on a survey by LifeWay, a publication arm of the Southern Baptist Convention. Since I read academic articles and books that use very rigorous methods, I used to wonder “where did that speaker or publication get those numbers?” when the figures reported didn’t comport with what I knew from the academic research world. Groups like LifeWay, which have a much broader audience, get the ear of practitioners, while academics often go unnoticed.

There are several ways one could assess the accuracy of a reported relationship. One is built on reputation: what survey firm conducted the survey? Some survey firms like the Gallup Organization have built a solid record of reliable studies, and that name-brand recognition comes at a premium. LifeWay doesn’t report who conducted the survey on its methodology page. All we know is that they defined a “churchgoing Protestant” as a Protestant who attends church once a month or more. Another tactic, then, is replication. I first turned to the General Social Survey, the longest-running, most rigorous, and most comprehensive omnibus survey on American attitudes and behaviors. Sadly, I discovered that the question of Bible reading appeared only a couple of times in the past 20+ years of this survey, and never in the 2000s. So I turned instead to the Baylor Religion Survey 2010, a solid survey conducted by the Gallup Organization every few years. As one of the contributors to the design of the survey, I feel confident in the findings, since we work carefully to ask the right questions and we work closely with a well-established survey group that provides us with survey data from a large random sample of Americans.

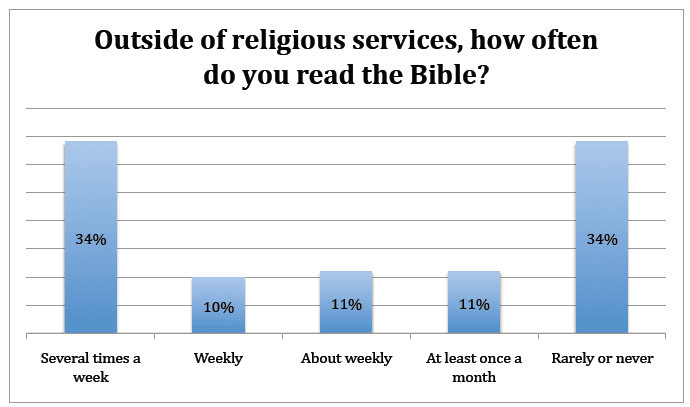

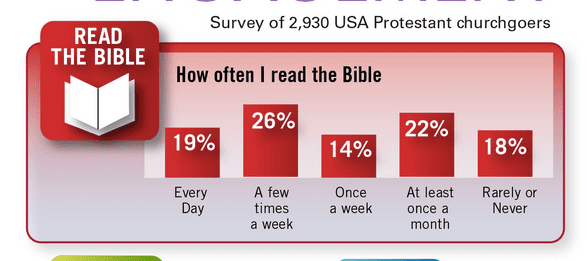

So here is a screenshot of the graph that LifeWay posted to illustrate the reported Bible reading rates of Protestants who attend church once a month or more:

As they state, about 19% of Protestants who attend church at least once a month read their Bible every day. About the same percent do not read much if at all. How does the Baylor Religion Survey compare?

As you can see, we can’t make exact comparisons, since LifeWay didn’t design their survey using the standard approach to the Bible reading question in academic social science research. Indeed, their aims are different. But we can set up approximate equivalences that allow a rough comparison. In LifeWay’s sample, 45% of Protestant monthly+ attenders read the Bible at least a few times a week. The BRS figure is lower: 34%. At the other extreme, the LifeWay survey found that 18% of Protestant monthly+ attenders read the Bible “rarely or never.” The BRS figure is nearly twice as high: 34%. The middle categories are roughly similar in the two surveys, at 36% and 32%.
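For readers who want to check the arithmetic, here is a minimal sketch of the comparison in Python. It uses only the percentages quoted above, collapsed into three rough categories. The category labels are my own shorthand, and assigning the 36% middle figure to LifeWay and the 32% figure to the BRS is an assumption based on the order in which they are listed.

```python
# Percentages quoted in the text for Protestants attending monthly or more.
# Assumption: the middle figures (36%, 32%) belong to LifeWay and BRS
# respectively; the category labels are shorthand, not survey wording.
lifeway = {"few times a week or more": 45, "middle": 36, "rarely or never": 18}
brs = {"few times a week or more": 34, "middle": 32, "rarely or never": 34}


def compare(a, b):
    """Return, per category, (b - a in percentage points, ratio b / a)."""
    return {k: (b[k] - a[k], round(b[k] / a[k], 2)) for k in a}


comparison = compare(lifeway, brs)
for category, (diff, ratio) in comparison.items():
    print(f"{category}: {diff:+d} points, BRS/LifeWay ratio {ratio}")
```

The “rarely or never” gap comes out to 16 percentage points, a ratio of about 1.9, which is where “nearly twice as high” comes from.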

What this suggests is that while LifeWay’s main concern was to show that active church-attending Protestants are not reading sacred Scripture enough, the BRS finding suggests that they should be even more alarmed. A much lower fraction of active Protestants read a lot, and a much higher fraction rarely or never read. Yet both of these findings lend more support to Chaves’ argument. We’re surprised at the low congruity between different behaviors that we assume go together in the life of the Protestant Christian. But perhaps incongruity is the norm, and the exception is when individuals or groups are remarkably consistent.

So this raises the question for both scholars and practitioners: if incongruity is normal, how should we restructure the way we model religious behavior, and how would religious groups alter their teaching and ministry with this foundational shift in their thinking?

Much gratitude to research assistant Shelly Isaacs for finding the LifeWay links and for the graphing work.