What should you do if someone you know behaves badly? Turn the other cheek, or take your revenge? According to Martin Nowak’s latest game-theory-based analysis, turning the other cheek is the strategy that’s most likely to reward you in the long run.

Nowak (Professor of Biology and of Mathematics at Harvard University) studies the repeated prisoner’s dilemma, a popular model of social interactions. In the game, you’re paired up with another person and, in each round, choose either to co-operate (which costs you something but gives your partner a larger benefit) or to defect (which benefits you at your partner’s expense). The payouts are set so that you both do best if you both co-operate, but each of you, individually, has a strong incentive to cheat, if you can get away with it!
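To make the payoff structure concrete, here is a minimal Python sketch of a single round. The numbers (co-operating costs you 1 and gives your partner 2; defecting gains you 1 and costs your partner 1) are illustrative assumptions, not necessarily the exact values used in the studies discussed here, and `EFFECTS` and `round_payoffs` are hypothetical names.

```python
# Illustrative payoffs (assumed values, not taken from the papers):
# co-operating costs the mover 1 and gives the partner 2;
# defecting gains the mover 1 and costs the partner 1.
EFFECTS = {
    # move: (change to the mover's payoff, change to the partner's payoff)
    "C": (-1, +2),
    "D": (+1, -1),
}


def round_payoffs(move_a: str, move_b: str) -> tuple[int, int]:
    """Return (payoff to A, payoff to B) for one round of the game."""
    a_self, a_other = EFFECTS[move_a]
    b_self, b_other = EFFECTS[move_b]
    # Each player's payoff = effect of their own move on themselves
    #                      + effect of the partner's move on them.
    return a_self + b_other, b_self + a_other


if __name__ == "__main__":
    for a in "CD":
        for b in "CD":
            print(a, b, round_payoffs(a, b))
```

Run as a script, it prints `(1, 1)` for mutual co-operation and `(0, 0)` for mutual defection, yet whatever your partner does, defecting pays you 2 more than co-operating. That gap is the dilemma.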

In a study published in early 2008, Nowak and colleagues looked at what happens if you are also allowed to punish defectors. This is so-called ‘costly punishment’: it costs you a bit, but it inflicts a greater cost on your victim. You might think that punishing cheaters would bring them back into line, and so increase your payout.
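Costly punishment can be added to the same kind of payoff table as a third move. In this sketch (again with assumed, illustrative numbers and hypothetical names), punishing costs you 1 but costs your target 4, and the script compares two ways of answering a partner who defects.

```python
# Illustrative payoffs (assumed values, not taken from the papers):
# C costs the mover 1 and gives the partner 2,
# D gains the mover 1 and costs the partner 1,
# P ("costly punishment") costs the mover 1 but costs the partner 4.
EFFECTS = {
    # move: (change to the mover's payoff, change to the partner's payoff)
    "C": (-1, +2),
    "D": (+1, -1),
    "P": (-1, -4),
}


def round_payoffs(move_a: str, move_b: str) -> tuple[int, int]:
    """Return (payoff to A, payoff to B) for one round."""
    a_self, a_other = EFFECTS[move_a]
    b_self, b_other = EFFECTS[move_b]
    return a_self + b_other, b_self + a_other


if __name__ == "__main__":
    # Player B defects; compare A answering with defection vs. punishment.
    print("defect back:", round_payoffs("D", "D"))  # (0, 0)
    print("punish:     ", round_payoffs("P", "D"))  # (-2, -3)
```

Answering defection with defection leaves both players at 0; punishing drives the defector down to -3 but leaves the punisher at -2. That shortfall relative to simply defecting back is the ‘cost’ in costly punishment.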

In fact, that wasn’t what happened. They showed that people who were quicker to use punishment tended to lose out overall, and that the best results were achieved by people who responded to defectors simply by defecting themselves (i.e. refusing to co-operate).

In reality, life is a little more complicated than this simple two-way interaction. We’ve evolved to live in groups, and most of our interactions are with people whom we’ve watched in action and formed an opinion of. Reputation is important.

In a new paper, Nowak and colleagues look at whether the reputation you earn can make punishment a more effective strategy. This time they used a mathematical model, rather than real people, to explore all the options.

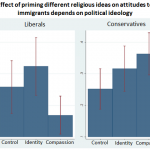

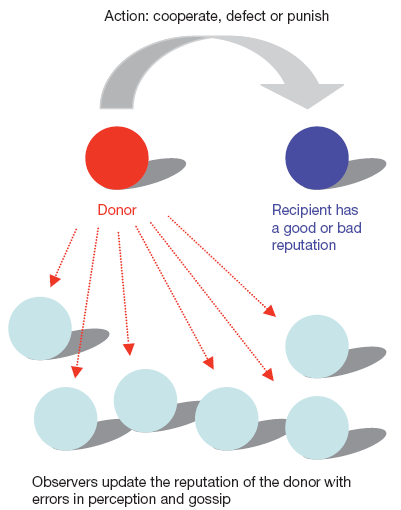

What the model assumes is that your actions are watched by a group of observers, who assign you a reputation according to your actions and whether the recipient of your action has either a good or a bad reputation (see figure above). They then treat you according to the opinion they’ve formed of you. It’s called ‘indirect reciprocity’, as opposed to the direct reciprocity of the two-person game.
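The observers’ bookkeeping can be written as a lookup table from (what you did, the recipient’s reputation) to your new reputation. The norm below is a hypothetical example chosen for illustration; it is not claimed to be the rule used in the paper, and `ASSESSMENT` and `assess` are made-up names.

```python
GOOD, BAD = "G", "B"

# Hypothetical assessment norm, for illustration only (not claimed to be the
# paper's): an observer judges the donor by the donor's action ("C" co-operate,
# "D" defect, "P" punish) and by the recipient's current reputation.
ASSESSMENT = {
    # (donor's action, recipient's reputation): donor's new reputation
    ("C", GOOD): GOOD,  # helping a good person is good
    ("D", GOOD): BAD,   # refusing to help a good person is bad
    ("P", GOOD): BAD,   # punishing a good person is bad
    ("C", BAD): BAD,    # helping a bad person is bad (a deliberately strict choice)
    ("D", BAD): GOOD,   # refusing to help a bad person is justified
    ("P", BAD): GOOD,   # punishing a bad person is justified
}


def assess(action: str, recipient_reputation: str) -> str:
    """Return the reputation an observer assigns to the donor."""
    return ASSESSMENT[(action, recipient_reputation)]
```

For example, `assess("D", BAD)` returns `"G"`: under this norm, refusing to help someone with a bad reputation keeps you in good standing.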

To make the model more realistic, they also assumed that the watchers could make mistakes when assessing your reputation, and that they talked to each other (they gossip!).
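Assessment errors and gossip can be sketched in the same spirit. Here each observer gets the assessment wrong with probability `error_rate`, and gossip is modelled crudely, as an assumption, by having everyone adopt one randomly chosen observer’s view. The paper’s actual treatment of errors and gossip may differ, and all names are hypothetical.

```python
import random


def flip(rep: str) -> str:
    """Return the opposite reputation ("G" <-> "B")."""
    return "B" if rep == "G" else "G"


def observe(correct: str, n_observers: int, error_rate: float,
            rng: random.Random) -> list[str]:
    """Each observer records the correct reputation, except that with
    probability error_rate it records the opposite one (an assessment error)."""
    return [flip(correct) if rng.random() < error_rate else correct
            for _ in range(n_observers)]


def gossip(views: list[str], rng: random.Random) -> list[str]:
    """Crude stand-in for gossip (an assumption): everyone adopts the view of
    one randomly chosen observer, so the group ends up sharing one opinion."""
    shared = rng.choice(views)
    return [shared] * len(views)


if __name__ == "__main__":
    rng = random.Random(1)
    views = observe("G", n_observers=10, error_rate=0.2, rng=rng)
    print(views)               # private opinions may disagree
    print(gossip(views, rng))  # after gossip everyone agrees, possibly on the wrong answer
```

With `error_rate` near 0 the observers agree on the right answer; as it grows, private opinions scatter, and this kind of gossip makes the group agree without making it right.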

What they found was that if the watchers were poor at assessing your reputation, then defection was the best strategy (you might as well defect, since the watchers are pretty clueless). If they were good at it, then you should co-operate with people who have a good reputation and defect against those who have a bad one.

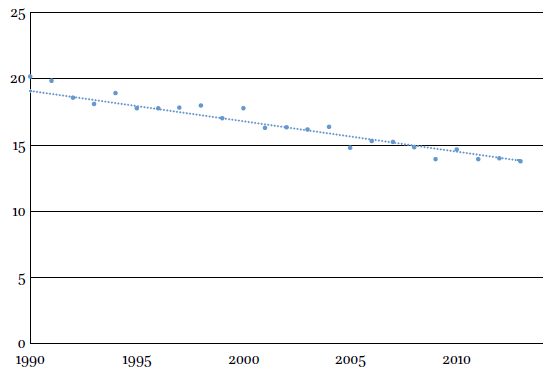

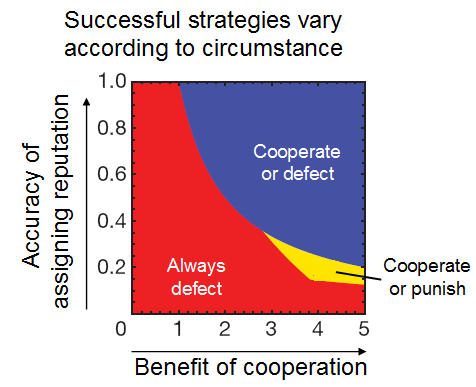

But there were hardly any circumstances in which you benefit from being in a group that believes in punishing those who are bad. You can get a flavour for what this means in practice from this figure, which shows how varying two of the parameters affects the best strategy.

If individuals are poor at assessing reputation, or if co-operation is not that beneficial, then the best strategy is to always defect. But if reputations are meaningful and co-operation is valuable, then you should co-operate with agents with a good reputation, and defect with those with a bad reputation (‘cooperate or defect’).
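The three strategies in the figure can be written as action rules that map the recipient’s reputation to a move. The sketch below adds hypothetical per-move costs to the donor (an assumption, as are the names) to show one side of the trade-off: punishing bad agents costs the donor something, whereas simply defecting against them costs nothing.

```python
# The three strategies named in the text, as action rules: what a donor does
# given the recipient's reputation ("G" = good, "B" = bad).
ACTION_RULES = {
    "always defect":       {"G": "D", "B": "D"},
    "cooperate or defect": {"G": "C", "B": "D"},
    "cooperate or punish": {"G": "C", "B": "P"},
}

# Hypothetical cost each move imposes on the donor (assumed values):
# co-operating and punishing cost something; defecting costs nothing.
DONOR_COST = {"C": 1.0, "D": 0.0, "P": 1.0}


def expected_donor_cost(rule: str, frac_good: float) -> float:
    """Average cost a donor pays per interaction when a fraction
    frac_good of recipients have a good reputation."""
    moves = ACTION_RULES[rule]
    return (frac_good * DONOR_COST[moves["G"]]
            + (1 - frac_good) * DONOR_COST[moves["B"]])


if __name__ == "__main__":
    for rule in ACTION_RULES:
        print(f"{rule:20s} {expected_donor_cost(rule, frac_good=0.8):.2f}")
```

With 80% good recipients it prints 0.00, 0.80 and 1.00 respectively. This covers only what a donor pays; whether a rule pays off overall depends on how reputations feed back into how others treat you, which is what the paper’s model works out.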

There’s only a very small patch (‘cooperate or punish’) where punishing those who are bad is the best strategy. It occurs when assigning reputation is pretty inaccurate, and the benefits of co-operation are very high.

Tweaking other parameters changes this landscape somewhat, but the general picture is always the same – there’s only a small window where ‘cooperate or punish’ is a good strategy.

So why did punishment as a strategy ever evolve? Well, this is a model, not reality. The agents are simple, and the model assumes that everyone has the same attitudes to crime and punishment.

But perhaps the reason that punishment is popular is not that it increases overall good, but that it brings fewer negative effects to the punisher than to everyone else. For example, punishment could evolve if it is a way of establishing social dominance. Nowak explains in his 2008 paper:

> We conclude that costly punishment might have evolved for reasons other than promoting cooperation, such as coercing individuals into submission and establishing dominance hierarchies. Punishment might enable a group to exert control over individual behaviour. A stronger individual could use punishment to dominate weaker ones. People engage in conflicts and know that conflicts can carry costs. Costly punishment serves to escalate conflicts, not to moderate them. Costly punishment might force people to submit, but not to cooperate. It could be that costly punishment is beneficial in these other games, but the use of costly punishment in games of cooperation seems to be maladaptive. We have shown that in the framework of direct reciprocity, winners do not use costly punishment, whereas losers punish and perish.

In other words, in games where costly punishment is used, even the ‘winners’ don’t do well; they simply do less badly than everyone else.

Hisashi Ohtsuki, Yoh Iwasa, Martin A. Nowak (2009). Indirect reciprocity provides only a narrow margin of efficiency for costly punishment. *Nature*, 457(7225), 79-82. DOI: 10.1038/nature07601

Anna Dreber, David G. Rand, Drew Fudenberg, Martin A. Nowak (2008). Winners don’t punish. *Nature*, 452(7185), 348-351. DOI: 10.1038/nature06723