I was going to include “My Year With ChatGPT” at the end of my previous post, but in the end decided that that post was long enough and this should be a post of its own.

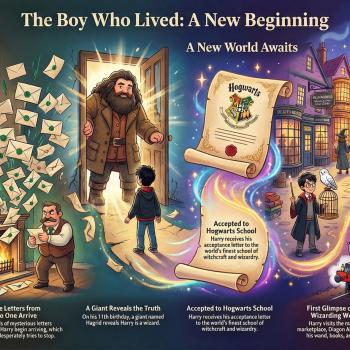

“My Year With ChatGPT” is a summary that that chatbot generates. Here are a couple of images that it produced:

Things like this are why some people genuinely feel understood by ChatGPT and consider it sentient, even a friend. What it does in instances like these is not fundamentally different from YouTube’s algorithm seeming to appreciate what kind of music and informational content I like. Given data and the ability to match patterns in it, an algorithm can appear to know you the way another person does. Add the ability to play the game of human language and verbalize appropriately, and presto: you have a convincing imitation of genuine understanding, because its outputs are ones that were previously possible only through human understanding.

Imagine someone using this technology to generate anti-science content, picture what their end-of-year compliments would look like, and you’ll grasp just how dangerous this technology can be.

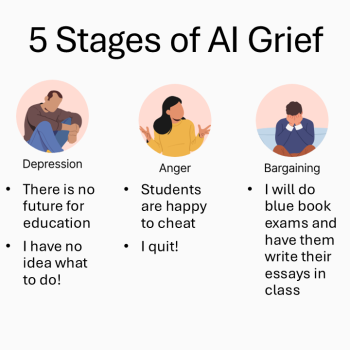

Yet it is also a powerful tool, and it isn’t going away. Educators must help students understand its risks and limitations as well as how to use it in positive ways.

Will it eliminate students’ prospective jobs (or those of educators, for that matter)? Since chatbots imitate speech through algorithmic processes and do not understand in the way humans do, they will always make mistakes and generate answers that do not correspond to reality. If we assume, for example, that chatbots give accurate responses 92% of the time, then the key question is whether and when that level of error will be acceptable to employers, especially since a chatbot does not require a salary comparable to that of a human employee. In some areas it may well be. In others, absolutely not. Helping students (and educational institutions) understand and navigate this is crucial. We need to accept that AI is here to stay and focus on preparing AI-savvy students who can use it when it is helpful, refrain from using it when it is inadvisable, and help their employers navigate these matters.

There are so many examples of the limitations of this technology. Tech optimists might call them bugs that will eventually be worked out, but those who genuinely understand this technology know better. If you understand that all LLMs do is imitate speech, then you’ll understand why they invent non-existent towns when reporting on the weather. You’ll understand why relying on them for medical advice is a really terrible idea. You may still use them, and I make no secret that I do. But you won’t say (as I heard someone say in a session today, when a question came up to which they did not know the answer) “That sounds like one for your friend ChatGPT.” A chatbot is not a friend, and it is not a source of information. It might be a stepping stone to finding those things, but if you understand what it is and is not inherently capable of, you won’t refer to it as though it were, in and of itself, a source of information.