Adventures in Artificial Intelligence and Faith, H+ 2006

The Mind of Philip Hefner

Will God’s Created Co-Creator Create an AI Co-Creator?

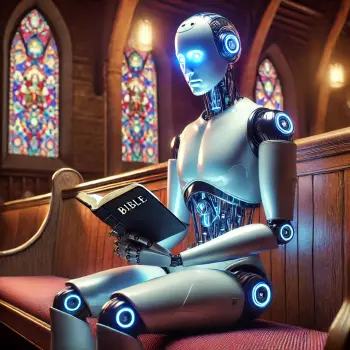

Will God’s Created Co-Creator create an AI co-creator? Will today’s generation give birth to descendants more intelligent than us? Will we give birth to artificially intelligent robots that will become more creative than we are? Will we pass through a Singularity? Will we cross a threshold after which AI co-creators will take over the administration of our world and render us humans obsolete?

With the creation of “superhuman intelligence…the human era will be ended,” wrote science fiction writer Vernor Vinge in 1993. After we create AI co-creators, we Homo sapiens will become has-beens. Right?

Dare we ask questions such as the following? When we humans create an AI co-creator, will we become gods because of what we have created? Or will our created AI co-creators, if they’re more intelligent than us, become the gods?

Is your head as dizzy as mine right now?

God’s Created Co-Creator

It was sockdolager Philip Hefner who gifted the field of systematic theology with the label for the human being: God’s Created Co-Creator. One salient dimension of the divine image (the imago Dei) within humanity, contends Hefner, is that we are creative. Oh, yes, creation from nothing (creatio ex nihilo) belongs to God’s province alone. Yet we Homo sapiens sapiens engage with God in continuing creation (creatio continua). We are constantly transforming the physical world, making new things just as God does.

This capacity for technological transformation is God’s gift to us. Along with this gift comes a moral responsibility, namely, to create new technologies that enhance our opportunity to live lives that are healthy, wholesome, and healing. Reformed theologian Anna Case-Winters puts it this way: “There is an invitation to dynamic relationality in our role as co-creators. The imago Dei then is not only a gift but also a calling” (Case-Winters, “Knowing Our Place,” 2022, 155).

This Patheos blog post in the field of public theology responds to two prompts. The first prompt is the celebration of a new book edited by Jason Roberts and Mladen Turk, Human Becoming in an Age of Science, Technology, and Faith. In this brand spank’n new volume, Philip Hefner contributes five essays that move the question of human creativity forward to the frontier of Artificial Intelligence. The book includes essays by other scholars as well, chapters that also move the conversation forward. Philip Hefner’s distinguished career in the field of Theology and Science deserves such a fine tribute.

The second prompt is the sudden, widespread surge of attention to the prospects posed by Artificial Intelligence, Intelligence Amplification, robotics, and even Transhumanism. Some worrywarts among us are concerned that the creation of an AI co-creator poses an existential threat to the human race as we’ve known it. Others are confident that, in principle, no robot will ever exhibit AGI (Artificial General Intelligence).

Perhaps AI and Faith, led by the able David Brenner, provides the most accessible online arena for this exploration and debate. In my series of Patheos posts, my interview with computer scientist and theologian Noreen Herzfeld provides excellent background for what we will hear in a moment from Philip Hefner.

Meet Philip Hefner

It was while I was a graduate student in Chicago that I wandered into a seminar Philip Hefner was teaching on Martin Heidegger’s Sein und Zeit. At the time I didn’t give a rat’s derrière about what would become Phil’s passionate interest, namely, Theology and Science. That came later. We partnered in the early 1990s on an NIH grant to monitor the theological and ethical implications of the Human Genome Project.

For decades Philip Hefner taught systematic theology at the Lutheran School of Theology at Chicago. While there, Phil helped establish the Chicago Center for Religion and Science in 1988, later renamed the Zygon Center for Religion and Science. He edited one of the world’s leading journals in the field, Zygon, until 2008.

His theological term, created co-creator, first appeared in his contribution to the two-volume work edited by Carl Braaten and Robert Jenson in 1984, Christian Dogmatics. It appeared again in an essay Phil wrote for a volume I edited in 1989, Cosmos as Creation. Phil’s most complete rendering of his theological anthropology appears in his 1993 monograph with Fortress Press, The Human Factor. In what follows we will draw out implications of God’s created co-creator for creating an AI co-creator.

In my own treatment of AI (Artificial Intelligence) in tandem with IA (Intelligence Amplification), I envisioned a fork in the road: either utopia or extinction? Professor Hefner’s assessment may be less dramatic, but he also sees positive and negative potentials.

Two Principles for Creating an AI Co-Creator

Philip Hefner offers up two principles for us to keep in mind as the theologian assesses the prospects of creating an AI co-creator.

1st Principle: “The more perfectly robots serve human needs, the closer they become to interacting adequately with humans, the more like us they will become. They may not be human, but they will be functioning humans. As that line is crossed, the robots become like human creatures. The human-created co-creator will have created its own co-creator” (Hefner, “The Greatest Challenge: The Created Co-Creator Creates a Co-Creator” 2022, 70).

2nd Principle: “The more perfectly humans serve God’s purposes, the closer they come to interacting adequately with God, the more like God they will become. They may not be God, but they will be functioning as God. When that line is crossed, the humans become godlike creatures and God’s own co-creator” (Hefner, “The Greatest Challenge: The Created Co-Creator Creates a Co-Creator” 2022, 73).

Will superintelligent robots become gods? Will we become gods if we create gods? My mind is beginning to boggle. I wonder if asking AI could help at this point.

An Interview with Philip Hefner on Creating an AI Co-Creator

Question 1. We are all celebrating the publication of the new book, Human Becoming in an Age of Science, Technology and Faith, edited by Jason Roberts and Mladen Turk. This is a fine tribute to you, Phil, and to your advancement of theological scholarship. You contribute five new chapters on the Created Co-Creator. How do these chapters relate to the 40 years you’ve given to this topic?

Philip Hefner. I coined the term, created co-creator, in the early 1980s, in my essay on “Creation” in the Christian Dogmatics. I was charged with including the doctrines pertaining to humans (what we then called the “doctrine of man”) within the doctrine of creation. I was also charged with incorporating Christian tradition into my essay. The two-volume work was, so to speak, “bringing tradition up to date in a Lutheran key.” I don’t recall my thought processes at the time, but the result was that “created co-creator” was the term that could give contemporary expression to traditional thinking about humans.

My first elaborations bordered on simplism, and I thought many of the responses, including some very negative ones, were off the mark. Holmes Rolston, for example, in a public lecture I attended at a conference in Amsterdam, denounced the idea as a threat to the environment and urged his audience to reject it out of hand.

Over the years, I discovered ever-deeper layers of meaning in the idea of the created co-creator. I have written about the deeper meanings in the intervening years, but this new book presents my journey of exploration in much more detail. I hope the book shows a richer idea of created co-creator, pointing to even deeper levels of meaning.

Question 2. The human race today just might be on the brink of creating AI co-creators in the form of intelligent robots, post-human machine intelligence. You emphasize that robots will never become human, even if they function as human. What do you mean: artificially intelligent robots will function as human yet not be human?

Philip Hefner. It is much simpler to deal with whether robots function like humans, rather than whether they are human. First, we would have to agree on criteria for judging what is human—not an easy task. Then we would have to agree on what precisely a robot would have to do in order to qualify as human. This is akin to the Chinese Room problem in philosophy.

So much ink has been spilled in trying to determine whether a computer can provide human responses. The test now referred to as the Turing Test was originally called the “imitation game”: can robots imitate humans? These arguments would be so involved that we very likely would never answer the question of robots being human. Second, we know the frustratingly complicated philosophical discussions of the verb “to be” and verbs derived from it. If we are not so clear about the meaning of “is,” how can we agree on whether a robot is human?

It is more workable to deal with function. We know what we want the robot to do, so we set goals, keeping in mind criteria that we have formulated to assess the robot’s performance. Humans formulate the goals and criteria, but the robots do not necessarily perform in the same way humans do. Deep Blue, IBM’s chess-playing computer, and IBM’s Watson are examples. They meet goals set by humans, and their performance can be assessed. But they employ methods that are appropriate to robots, and not necessarily to humans. Companion robots respond to emotions that humans signal. While the robot responds to the signals, sometimes with remarkable accuracy, it does not experience human emotions.

My Lutheran denomination uses the slogan, “God’s work, our hands.” We do the work in the way humans work, not as God might work. And we don’t say that in doing this work, we are God. You can train a dog to go to the fridge and retrieve a cold beer—performing a human function—but the dog does not thereby become a human.

Question 3. You introduce the notion of the “demonic” when forecasting the future of AI and robotics. Why do you use the term, “demonic”? Why not say simply that robotic technology could be used for either good or evil purposes?

Philip Hefner. I follow Paul Tillich at this point. To say that robots can be used for good or evil is banal. There is more to say. The “demonic” is the good turned against itself. The demonic manifests itself in many forms. Our opposable thumbs can become demonic in that, although we are not evil persons, our juices of life can flow into evil. AI robots are not necessarily conceived in evil, but their functioning can be evil. There is a human-robot nexus at work here, just as there is a God-human nexus in our nature as created co-creators. In our God-given faith, we might single out an “enemy” for killing. Is God thereby accountable for our killing?

That is a good question. In any case, our God-given capabilities can become demonic. So also, certain AI processes and capabilities are not conceived in evil, but they can be turned to killing people, perhaps as drones. Whether the creator of the robot is accountable for killing is a good question, too. Certainly the president or general who orders the killing shares accountability. The AI processes, however conceived, have become evil. Here we touch on the issue that Marge Piercy raised in her novel He, She and It: are robots moral entities? Do we have moral obligations to our robots?

The term “demonic” opens up these deeper insights and questions.

Question 4. Phil, you may be unique among theologians in emphasizing that the technology we humans create will make us more godlike. How is this like or unlike the concept of theosis or deification familiar to Eastern Orthodox Christians?

Philip Hefner. I think I’m in over my head on this one. Since (I am convinced) technology is intrinsic to who we are in the present age, it certainly must have a place in our relation to God. It is inherent to the processes of our sanctification and hence, theosis. It would be intriguing to spell this out. I did do some of this in two little books, Technology and Human Becoming and Religion-and-Science as Spiritual Quest for Meaning.

Question 5. What else would you like to say at this point?

Philip Hefner. My work may be considered an odd fit for the Public Theology genre. I believe that I have devoted much of my work to discussing themes that are publicly relevant. I have argued that my theological concepts could be “secularized” by removing their explicit God-talk, similar to what some secularists did to Reinhold Niebuhr. This was my attempt to speak to a wider public. I follow Tillich’s principle that the gospel responds to a culture’s existential questions. I believe that today we are asking questions prompted by the increasingly naturalistic worldview that has taken hold in our times. Questions such as: Does evolution have meaning and purpose? Are we humans nothing more than a collection of molecules and genes? The idea that we are God’s created co-creators may be a credible Gospel response to such existential questions that our culture raises.

The AI Co-Creator: who gets to be the god?

Here’s what we hear from AI optimists among our transhumanist friends: after the singularity in which AI co-creators take over, “an entirely new species of gods will exist” (Braxton, 2021, 8). Will we humans have created a new set of gods?

There’s a difference, I think, between being a god and being godlike. When public systematic theologians employ the term, imago Dei, they intend to say we are godlike. On the one hand, we are born godlike with our inborn divine image. On the other hand, to become godlike is a process of sanctification in which we become increasingly loving and increasingly saintly. In Orthodoxy, this sanctified godlikeness is called deification or theosis. “Deification does not transform us into independent deities but rather frees us from our pretensions to autonomy so that we may participate in the blessed, communal life of the triune God,” writes Ian Curran (Curran, “Becoming godlike,” 2017, 8).

In our Patheos series on transhumanism (see list below), we asked this question: could AI or IA speed us along the track of theosis toward maximum godlikeness? We answered in the negative. Why? Because becoming sanctified requires the consent of a Spirit-infused free will, something neither AI nor IA could deliver. We must conclude that robotic technology is not likely to enhance our human godlikeness, at least in this respect.

In the theologically uninformed arrogance of transhumanists, AI- or IA-enhanced humans are said to become gods in the sense that future humans will have more power than today’s species. In this case, divinity simply means power.

There is much more to divinity than merely power, as Christian transhumanists know well.

Interpreted in light of Philip Hefner’s notion of the Created Co-Creator, we might explore the divinity-is-more-than-power matter by asking a more nuanced question about the role of creativity in godlikeness. Could we create AI co-creators that would surpass our generation of technological innovators? Would creating AI co-creators make Homo sapiens gods in the sense that the real God is God? No, because we would still be transforming what already exists rather than creating ex nihilo, from nothing. The true God is at no risk of losing any uniqueness, let alone divinity.

The responsibility of the public theologian in the controversy over the future of Artificial Intelligence is to caution against speculative overreach. After celebrating the advances in computer and robotic technologies over the last half century, it’s quite easy to take a leap in our imaginations to a future techno-utopia that leaves behind all the ugliness of the human past. The public theologian today, like Reinhold Niebuhr and Paul Tillich of yesterday, should point out that no amount of technological innovation can cure the human race of original sin. Only grace from the true God can do that.

▓

Ted Peters pursues Public Theology at the intersection of science, religion, ethics, and public policy. Peters is an emeritus professor at the Graduate Theological Union, where he co-edits the journal, Theology and Science, on behalf of the Center for Theology and the Natural Sciences, in Berkeley, California, USA. His book, God in Cosmic History, traces the rise of the Axial religions 2500 years ago. He previously authored Playing God? Genetic Determinism and Human Freedom (Routledge, 2nd ed., 2002) as well as Science, Theology, and Ethics (Ashgate 2003). He is editor of AI and IA: Utopia or Extinction? (ATF 2019). Along with Arvin Gouw and Brian Patrick Green, he co-edited the new book, Religious Transhumanism and Its Critics, hot off the press (Rowman and Littlefield/Lexington, 2022). Soon he will publish The Voice of Public Theology (ATF 2022). See his website: TedsTimelyTake.com. His fictional spy thriller, Cyrus Twelve, follows the twists and turns of a transhumanist plot.

▓

Other Patheos Resources on AI, IA, and Transhumanism

Is AI a shortcut to virtue? To holiness?

The Transhuman, The Posthuman, and the Truly Human

Radical Life Extension? Cybernetic Immortality? Or Resurrection of the Body?

Religious Transhumanism 4: Evangelical? Yes

Religious Transhumanism 5: Mormon? Yes

Religious Transhumanism 6: Jewish? No

Religious Transhumanism 7: Buddhist? Yes

Religious Transhumanism 8: UU? Yes

Christian Transhumanism versus Transhumanist Christianity

Religious Transhumanism 10: Transhumanism vs Posthumanism

Religious Transhumanism 11: What about the body?

Religious Transhumanism 12: Catholic?

Religious Transhumanism 13: Methodist?

Religious Transhumanism 14: Scientific?

Religious Transhumanism 15: Lutheran?

Bibliography

Braxton, Donald, 2021. “Religion Promises but Science Delivers: The Transhumanist Wager.” The Fourth R: Westar Institute 34:3 (May-June) 3-9.

Case-Winters, Anna, 2022. “Knowing Our Place: In the Image of God, at Home in the Cosmos,” Human Becoming in an Age of Science, Technology, and Faith: Philip Hefner. eds., Jason P. Roberts and Mladen Turk. Lanham MD: Lexington; 153-170.

Curran, Ian, 2017. “Becoming godlike? The Incarnation and the Challenge of Transhumanism.” The Christian Century 134:24 (November 22) 22-25.

Hefner, Philip, 2022. “Created to Be a Creator,” Human Becoming in an Age of Science, Technology, and Faith: Philip Hefner. eds., Jason P. Roberts and Mladen Turk. Lanham MD: Lexington; 9-22.

Hefner, Philip, 2022. “Created Co-Creator,” Human Becoming in an Age of Science, Technology, and Faith: Philip Hefner. eds., Jason P. Roberts and Mladen Turk. Lanham MD: Lexington; 55-68.

Hefner, Philip, 2022. “The Greatest Challenge: The Created Co-Creator Creates a Co-Creator,” Human Becoming in an Age of Science, Technology, and Faith: Philip Hefner. eds., Jason P. Roberts and Mladen Turk. Lanham MD: Lexington; 69-77.

Peters, Ted, 2022. “The Crisis of Technological Civilization,” Human Becoming in an Age of Science, Technology, and Faith: Philip Hefner. eds., Jason P. Roberts and Mladen Turk. Lanham MD: Lexington; 141-152.