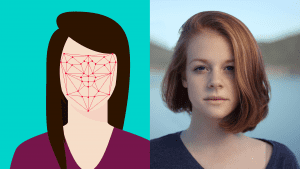

I’m currently writing a doctoral thesis in moral theology on the topic of privacy. A question arose in the Democratic primaries around whether police should be allowed to use facial recognition software.

Vox wrote an interesting piece on this software, focusing on how it can have embedded biases. I am more concerned about privacy, and thus about its use by both private companies and the government.

The Current Debate over Facial Recognition Software

Vox reported on this:

In August, Sen. Bernie Sanders became the first presidential candidate to call for a total ban on the use of facial recognition software for policing. As part of his broader criminal justice reform plan, he also called for a moratorium on the use of algorithmic risk assessment tools that aim to predict which criminals will reoffend. […]

Some find this state of affairs disappointing, especially given that the public pushback against facial recognition is gaining momentum. The California Senate passed a bill this week placing a three-year moratorium on the use of facial recognition in police body cameras. State legislatures in New York, Michigan, and Massachusetts are also considering bills to rein in the technology. Bipartisan legislation is expected to be forthcoming in Congress.

“Facial recognition poses a unique threat to human liberty and basic rights — any candidate who wants to be taken seriously on criminal justice issues should be calling for an outright ban, or at the very least a moratorium on current use of this tech…” said Evan Greer, deputy director of the digital rights nonprofit Fight for the Future. […]

Sanders says he won’t allow the criminal justice system to go on using algorithmic tools for predicting recidivism until they pass an audit, because “we must ensure these tools do not have any implicit biases that lead to unjust or excessive sentences.” […]

Woodrow Hartzog and Evan Selinger, a law professor and a philosophy professor, respectively, argued last year that facial recognition tech is inherently damaging to our social fabric. “The mere existence of facial recognition systems, which are often invisible, harms civil liberties, because people will act differently if they suspect they’re being surveilled,” they wrote. The worry is that there’ll be a chilling effect on freedom of speech, assembly, and religion.

The authors also note that our faces are something we can’t change (at least not without surgery), that they’re central to our identity, and that they’re all too easily captured from a distance (unlike fingerprints or iris scans). If we don’t ban facial recognition before it becomes more entrenched, they argue, “people won’t know what it’s like to be in public without being automatically identified, profiled, and potentially exploited.” […]

This is not a far-off hypothetical but a current reality: China is already using facial recognition to track Uighur Muslims based on their appearance, in a system the New York Times has dubbed “automated racism.” That system makes it easier for China to round up Uighurs and detain them in internment camps.

I have grabbed just a few lines here; the whole piece is rather lengthy, if you want to read it.

Moral Analysis of Privacy & Facial Recognition

I think Bernie Sanders is onto something here. This technology is fraught with ethical issues regarding privacy and the right to one’s good name.

It may prevent some crime or catch some criminals. A man decides against shoplifting because he knows the store security camera will match his face against a universal database and he will be caught. Or a criminal on the run gets caught as he walks by a corner where a police camera uses facial recognition. These are undoubtedly good things. However, are they worth the cost?

In cities, there is already the sense that once you leave your house, every area you are in probably has a security camera recording somewhere. In some parts of cities, police have cameras on almost every corner. However, once these cameras are connected and equipped with facial recognition technology, none of us would be able to go for a cup of coffee with a friend without that becoming part of the public record. If you are caught on camera publicly intoxicated, even if a friend drives you home, you might get a ticket in the mail.

A year ago, Sen. Durbin asked Mark Zuckerberg a few questions about privacy. One was: “Would you be comfortable sharing with us the name of the hotel you stayed in last night?” Obviously, even Zuckerberg was not comfortable sharing this. We all want a certain amount of privacy over what we do, but with wide use of facial recognition, this no longer seems possible.

We all understand the problem with the social credit score the Chinese government is using. The Vox article even noted how it includes institutional racism against an ethnic minority group in the country. But even beyond that, restricting trains to those who are on the government’s “good list” seems oppressive. I don’t think anything like that is likely from Western governments anytime soon, but if we aren’t careful about how we use facial recognition, this technology could create a certain dystopia. Imagine if, when we hold a Eucharistic procession through downtown, all of it were logged in a government computer forever. That might scare some people away, and most of us probably don’t want that on a government computer for 30 years.

Maybe in the future we can find a limited use of facial recognition software by governments, but we need a ban now to make sure we get it right. Once we have a database of everyone’s face, and your every step through downtown for a decade is stored on a computer, it will be in large part too late.

Note: If you want more on privacy from a Catholic perspective, please support me on Patreon.