Bob Seidensticker has looked over my recent post on objective morality and hard-to-get-at truths, and he’s got some more questions. Let me pull out a couple of quotes from Bob’s post:

> I’ll agree that there’s nothing absolute for the consensus to be truth about. When we say, “Capital punishment is wrong,” there is no absolute truth (the yardstick) for us to compare our claim against. Is capital punishment wrong? We can wrestle with this issue the only way we ever have, by studying the issue and arguing with each other in various ways, but we have no way to resolve the question once and for all by appealing to an absolute standard.
>
> …As for “arbitrary,” my morals may be arbitrary in an absolute sense, but of course they don’t feel arbitrary in a throwing-darts-at-a-list-of-possibilities sort of way. I consult my conscience with moral questions, and it gives me answers. No need for an external anything. (If you say, “Wait—where did that conscience come from? Didn’t that get put in there by an external agent?” then I point to evolution as the source.)

What I don’t understand is why Bob sees his conscience as worth listening to. There’s a very boring possible answer to that question (“It’s uncomfortable when I don’t! I dislike the queasy feelings that tend to result, so I’m conditioned to follow my conscience’s promptings!”) that I hope he won’t give. For one thing, that answer doesn’t give much of a reason to pass up wireheading or soma (typical error checks for hedonic philosophies). But, if this is his answer, Bob should be prepared to recognize his conscience as his enemy, like Spike’s chip was on Buffy.

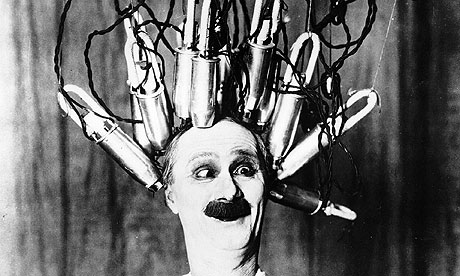

We’re getting closer to being able to tweak brain chemistry through implants and magnetic stimulation. It’s not too science-fictional to imagine Mad Scientist!Me deciding to put some new constraints on Bob’s desires. Can I make any wrong changes, such that Bob should want to pull out the implant? Is there such a thing as brainhacking malpractice, if the patient is prepared to accept whatever sort of conscience seems to speak to him?

Let me give you another thought experiment. Now, I’m not a Mad Brain Scientist, I’m a Mad Computer Scientist. I’ve put together some kind of computer program and I present it to you as a black box — you don’t get to see how it works, but you can give it different inputs and see what it outputs.

“Hey, Bob,” I say. “I’ve got a pretty nifty computer program here. It can give you advice about what to do when you’re not sure about a moral problem. In long-duration clinical trials conducted here in the present, people who did what the black box told them whenever they asked it a question were more likely to have children than people who ignored the black box’s advice, people who weren’t given a copy of my black box, and people who were just given a magic eight ball hidden in a black box. (I had a devil of a time getting an IRB to approve all those control groups, but I wanted to be thorough.) Would you like a black box of your own?”

I’m not sure why Bob should turn me down. The box I’m offering him is optimized according to criteria pretty similar to those behind his conscience, which he trusts because it was shaped by evolution. In fact, my box is probably better optimized than his conscience, since it’s more closely tailored to the world where Bob actually lives, instead of the ancestral environment that shaped slow-moving evolution. And go ahead and assume that I’m telling the truth, my studies are valid, and my box really does tend to improve the reproductive fitness of Bob-type people by a significant margin.

I’d say I didn’t have enough data to accept the offer. There are a number of ways to be strong and fit, and some of them are awful. And, looking back at his post, it looks like Bob knows that. He writes:

> There’s evidence that evolution built us to think that rape and slavery are okay, as long as you’re on the giving end. We see this attitude in the Old Testament, for example. However, modern society teaches us something different. This is the instinctive moral sense being overridden by the societal moral sense. Sam Harris writes about this in his The Moral Landscape. As I understand this, he argues for an objective morality of the second kind—one that we can hone with science and reason.
>
> Here I agree with Leah that we aren’t stuck with our evolutionary programming. We can and do rise above our instincts.

“Rise above” presupposes some dimension of height. “Hone” implies some form that we’re getting closer to by paring away extraneous material. If you have a sense that more is possible, then you must have some expectation that an external standard exists, and that you have some kind of access to it (even if it’s as limited as our access to physical laws, which we have to painstakingly deduce). But, if you’re looking for a guide, a conscience formed by evolution is no more trustworthy than my black box.